STEREOSCOPIC 3D

FOREWORD

It has been a while since I wrote the article about 3D Cinema. At the time I wrote the page through the eyes of a cinema and as a part time projectionist, watching the industry from the front end as it were: adopting capable 3D technology, the cost implications involved and the cost of following the trend, or not. It seems most cinemas have invested, offering at least one 3D screen. However, I still see the independents struggling because of these investments, which to me is a shame as I want them to survive. I also have to confess that they have my heart, not the unappealing generic chains, sorry.

3D Cinema certainly made an impact, but not as big as I originally thought or expected. Instead of using the nature of 3D to enhance the storytelling, I feel the industry cynically used it to add a premium to the already expensive cinema seat. Sadly it seems films are not being shot in 3D, just converted in post, which I feel loses many of the tools that are available with 3D cameras. I believe none of the main television manufacturers even offers a 3D TV any more. I confess, both of my televisions are 3D and I love the format.

How 3D Works: To see a 3D image effectively on a 2D plane (your HDTV), you need a way to show each eye a slightly different image - that's how you "trick" your brain into perceiving depth. The easiest way to do this is to wear glasses that present a different image to each eye. In the cinema we use “passive” glasses, which are like a pair of specially designed polarized sunglasses. Unlike sunglasses though, which block light equally for both eyes, each lens of a pair of polarized 3D glasses passes a different polarization of light, so each eye sees only the image intended for it, creating the illusion of depth.

For the home television market we mostly use active-shutter glasses. Each "lens" is a small LCD panel, and the glasses darken the left and right lenses alternately. They rely on an infrared signal emitter in the TV that tells each pair of glasses when to darken each lens, so each eye sees only the image intended for it. Since active-shutter glasses are fairly complicated electronics, they're pricey and generally only work with 3D TVs made by the same manufacturer.

Passive 3D glasses, on the other hand, are the same kind of polarized glasses used in the cinema, so they are lighter and cheaper. You don't need any kind of expensive, delicate electronics in the glasses themselves, nor do you need a proprietary infrared emitter to sync with them. However, since each lens blocks the part of the picture meant for the other eye, you're technically not getting a full 1080p image for each eye, though your brain should perceive a 1080p image when it puts the two together.

My Verdict - Passive 3D Wins:

Even though active-shutter glasses should produce a better image, having used my eyes for my career, I find that wearing active glasses for a prolonged period hurts. Maybe it’s because I wear prescription glasses as well? I can personally see the active shuttering in action, which may not help either. However, the quality of the viewing platform plays a huge part in the experience, as does the type of 3D glasses tech the TV employs. While passive 3D tech is presently at a disadvantage for image quality, it can nonetheless create a better-looking overall image than an active-shutter set that just doesn't get it quite right. Unless active-shutter manufacturers step up their game significantly in price and ease of use, I personally think passive 3D is the way to go.

As I mentioned, most 3D content is made for the cinema and then transferred to Blu-ray for home viewing. Something I have noticed, and which annoys me, is parallax issues on the small screen, where the two images cause ghosting or interlaced edges. Not even adjusting the 3D in the set itself can cure these anomalies, trust me, I have tried. It is a Z depth issue which may need adjustment between the two platforms (cinema and home viewing).

3D FOR BROADCAST

You may have noticed that many high profile events (in the UK) have been transmitted in 3D in the past. Now it seems they are being dropped in favour of UHD (4K). I still maintain that whatever is being broadcast, 3D or 4K, the content has to be good, which is why I really believe 3D was never given the chance to take off. I often wondered how much 3D added to the experience of ‘Last Night at the Proms’. However, I understood they had to test the broadcast route somewhere and it was a bit of fun, even if the conductor could never truly poke you in the eye.

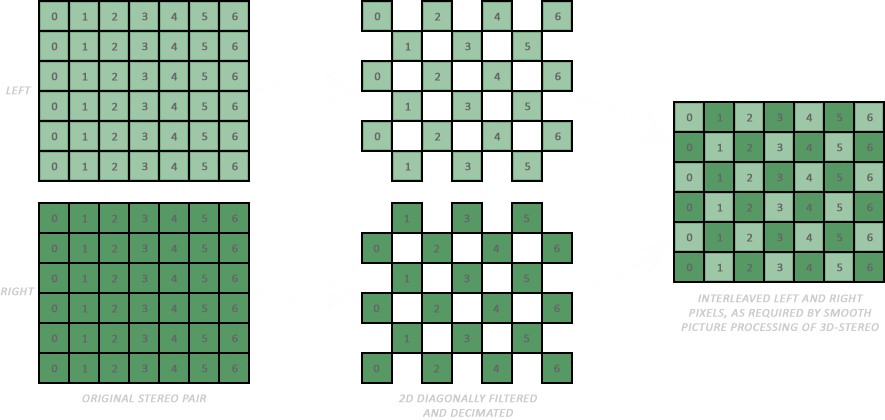

Broadcast: A pair of matched cameras (not specialised), typically spaced at roughly eye distance, is used to capture the image. The horizontal offset produces a binocular disparity (the difference in the image location of an object as seen by the left and right eyes). The disparity, together with other information in a scene (the size of objects, sharpness, shadows and relative motion), is processed by the mind to create depth perception. Presently, transmitting two full quality Stereo3D HD signals, one each for the left and right eye, is impractical because it uses significant bandwidth and risks the two signals picking up unwanted artefacts. There are several schemes that aim to avoid these problems, including Side by Side, Chequerboard and Stereo3D specific compression.
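To put rough numbers on the horizontal offset described above, here is a minimal sketch. It assumes two parallel pinhole cameras, which is a simplification of a real rig. The function name `disparity_px`, the 1500 pixel focal length and the 65 mm spacing are my own illustrative choices, not figures from any particular camera.

```python
def disparity_px(focal_length_px, baseline_mm, depth_mm):
    """Horizontal disparity in pixels for two parallel pinhole cameras.

    Assumes an idealised rig: identical cameras, optical axes parallel,
    separated horizontally by baseline_mm.
    """
    if depth_mm <= 0:
        raise ValueError("depth must be positive")
    return focal_length_px * baseline_mm / depth_mm


# Nearer objects shift more between the two views than distant ones.
for depth_m in (1, 2, 5, 20):
    shift = disparity_px(1500.0, 65.0, depth_m * 1000.0)
    print(f"object at {depth_m:>2} m: disparity {shift:.2f} px")
```

The point to take away is simply that the disparity shrinks as the object gets further away, which is one of the cues the mind uses to judge depth.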

Side by Side: In ‘Side by Side’ a single signal is created that ‘squashes’ the left and right eye images into a single picture. The signal travels as a single stream and is expanded by the viewing device, which means there is no possibility of two signals slipping out of sync. There is no need for extra transmission bandwidth compared to a conventional signal; however, the raw signal itself shows up as a side by side image on a conventional TV.
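The squash and expand steps can be sketched in a few lines. This is only an illustration under my own assumptions: frames are plain lists of rows of integer pixel values with an even width, the squash averages neighbouring pixels and the expand simply repeats them. Real encoders and TVs use better filters, and the function names are hypothetical.

```python
def squash_horizontally(frame):
    """Halve the width by averaging each pair of neighbouring pixels."""
    return [
        [(row[x] + row[x + 1]) // 2 for x in range(0, len(row) - 1, 2)]
        for row in frame
    ]


def pack_side_by_side(left, right):
    """Put the squashed left eye in the left half, right eye in the right half."""
    return [
        l_row + r_row
        for l_row, r_row in zip(squash_horizontally(left), squash_horizontally(right))
    ]


def unpack_side_by_side(packed):
    """Split the frame in two and stretch each half back to full width."""
    half = len(packed[0]) // 2

    def stretch(row):
        # Repeat every pixel twice (crude nearest-neighbour expansion).
        return [pixel for pixel in row for _ in range(2)]

    left = [stretch(row[:half]) for row in packed]
    right = [stretch(row[half:]) for row in packed]
    return left, right


left_eye = [[10, 20, 30, 40], [50, 60, 70, 80]]
right_eye = [[1, 3, 5, 7], [9, 11, 13, 15]]
packed = pack_side_by_side(left_eye, right_eye)
print(packed)  # same width as one original frame
```

The packed frame is the same size as a single conventional frame, which is why no extra bandwidth is needed. The price is that each eye only keeps half of its horizontal detail.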

Chequerboard: In ‘Chequerboard’, both eyes are placed in a single frame, rather like a chessboard with the white squares as one eye and the black squares as the other. With this methodology there is no possibility of two signals getting out of sync and no extra bandwidth is needed.
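The chessboard idea is easy to sketch. Here I assume both eyes arrive as equally sized lists of rows, and that the left eye takes the squares where column plus row is even. Which eye gets which colour of square is my own arbitrary choice, and `pack_chequerboard` is a hypothetical name.

```python
def pack_chequerboard(left, right):
    """Interleave two equally sized frames pixel by pixel, chessboard style."""
    height, width = len(left), len(left[0])
    return [
        [left[y][x] if (x + y) % 2 == 0 else right[y][x] for x in range(width)]
        for y in range(height)
    ]


left_eye = [["L"] * 4 for _ in range(2)]
right_eye = [["R"] * 4 for _ in range(2)]
for row in pack_chequerboard(left_eye, right_eye):
    print(" ".join(row))
```

As with Side by Side, the result is one frame of normal size, so each eye gives up half of its pixels to make room for the other.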

3D Compression: I have to admit I know very little about 3D compression for broadcast. ‘Stereo3D compression’ includes a variety of possible schemes that take advantage of the similarity between the captured left and right images to intelligently send only the data that is needed, much like MPEG coding. The downside at present is that compressing the two eyes as separate clips with non Stereo3D compression is very risky, because of the possibility of introducing concatenation or compression artefacts that differ between the eyes. Good Stereo3D compression schemes are more than likely to find favour because they will ultimately be able to produce a matched, stable image between the two sources, which in turn will be small enough to allow the data to squeeze down an existing broadcast pipe.

For a 3D presentation to be captured and screened, the post production route has changed the Digital Intermediate realm. The most obvious reason is that you have doubled the amount of captured material, which certainly adds to the logistics of a DI 3D project. Then you have to add effects in 3D (left eye and right eye rendering), which have to be composited successfully so the 3D effect does not break.

The biggest problem in producing 3D is time and the investment in hardware and systems, as well as removing any stereo or non stereo image errors and conflicts between cameras. A critical role for post production is to create stereo that is comfortable to watch over extended periods of time. That means handling the z-space information on a shot so that it is technically and artistically correct, and also handling z-space over a sequence of shots so that the eyes can comfortably adjust.

Issues in Stereo 3D Post

Like any production, 3D post production is about getting the workflow right. Stereo 3D doubles the data load, with two streams, and demands that the two are handled in sync and in real time. Something I highly recommend in 3D is that the ‘fix it in post’ mentality be left at the door. Don’t expect post production to get you out of trouble: the adage that you can’t polish a turd is doubly relevant in 3D. Fantastic 3D on screen is a combination of great shooting and even greater, thorough post production.
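To give a feel for what doubling the data load means, here is a back-of-envelope sketch. The figures are my own assumptions for illustration (uncompressed 1920x1080, three channels at 10 bits each, 24 frames per second); real DI formats and file headers will differ.

```python
def data_rate_mb_per_s(width, height, channels, bits_per_channel, fps, eyes=1):
    """Uncompressed picture data rate in megabytes (10^6 bytes) per second."""
    bits_per_frame = width * height * channels * bits_per_channel
    return bits_per_frame * fps * eyes / 8 / 1_000_000


mono = data_rate_mb_per_s(1920, 1080, 3, 10, 24)
stereo = data_rate_mb_per_s(1920, 1080, 3, 10, 24, eyes=2)
print(f"single eye: {mono:.1f} MB/s")
print(f"stereo    : {stereo:.1f} MB/s")
```

Under those assumptions the storage and playback system has to sustain twice the throughput of a 2D project, and keep both streams in step while doing so.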

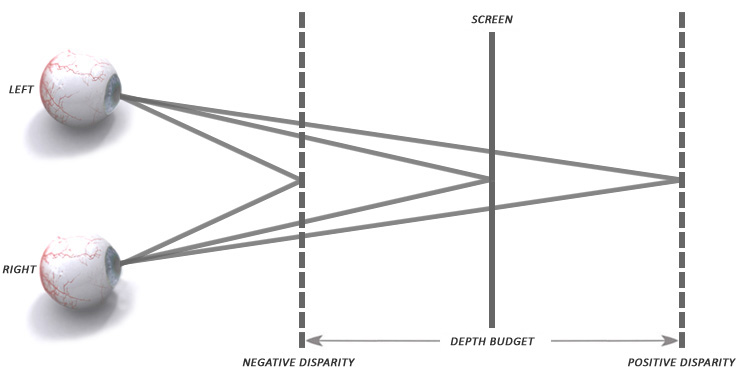

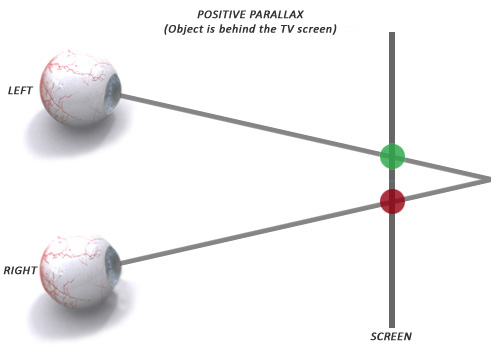

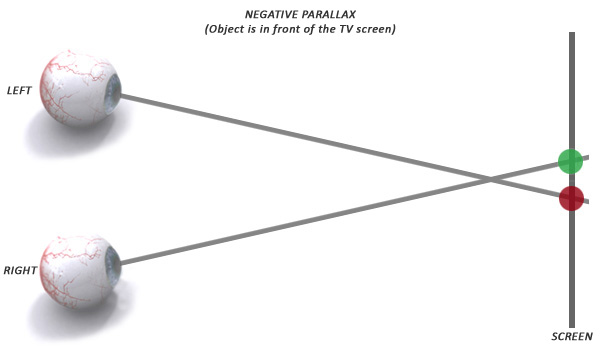

Eye Strain: The Depth Budget, another Stereo3D term to learn, describes the limits for negative parallax (in front of the screen plane) and positive parallax (behind the screen plane). I call it bodybuilding for the eyes. Keeping within tolerance will ensure that eye strain is kept to a minimum. Remember, a movie is a long time to be watching.

The depth budget is the maximum amount of 3D depth in front of and behind the physical display surface that it is recommended to use for a specific 3D display. If the depth budget is exceeded then viewers will find it increasingly uncomfortable to view a stereoscopic image, and for larger values they will see a double image (ghosting). The aim of defining a depth budget is to give content creators a concept of the working volume they can use on each display. This is why some material looks great in the cinema but not so good on your 3D TV at home.

The depth budget is defined in mm of perceived depth, measured from the display surface, and provides a precise target for the content creator to work within. The limits can be different in front of and behind the display. The size of the depth budget is strongly dependent on viewing distance: the further you are from a display, the higher the depth budget can be. This is because the human vision system responds to stereoscopic images differently at different viewing distances from the physical display surface.
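The link between on-screen parallax, viewing distance and perceived depth can be sketched with simple similar-triangle geometry. This is only the geometry, not a comfort model, and it is not how any particular depth budget is specified. The 65 mm eye separation and the function name `perceived_depth_mm` are my own assumptions.

```python
def perceived_depth_mm(parallax_mm, viewing_distance_mm, eye_separation_mm=65.0):
    """Perceived depth relative to the screen, from simple viewing geometry.

    Positive parallax gives a positive result (behind the screen),
    negative parallax gives a negative result (in front of the screen).
    """
    if parallax_mm >= eye_separation_mm:
        # The sight lines no longer converge; the eyes would have to diverge.
        raise ValueError("parallax must be smaller than the eye separation")
    return viewing_distance_mm * parallax_mm / (eye_separation_mm - parallax_mm)


# The same 10 mm of parallax, viewed from 2 m and from 4 m.
for distance_mm in (2000.0, 4000.0):
    behind = perceived_depth_mm(10.0, distance_mm)
    in_front = perceived_depth_mm(-10.0, distance_mm)
    print(f"at {distance_mm / 1000:.0f} m: {behind:.0f} mm behind, {-in_front:.0f} mm in front")
```

Note that the same parallax produces a different depth at each viewing distance, and that equal amounts of positive and negative parallax do not give equal depth, which fits with the limits being different in front of and behind the display.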

The Depth Pass: During the Digital Intermediate, yet another job for a Stereo3D production will be a Depth Pass, which involves manipulating the point of interest in Z-space. This has come to be known as Depth Balancing or Depth Grading. This is the stage in the post production process where full quality, real time playback is vital for producing good, watchable stereo in the shortest possible time.

This may mean that an additional deliverable needs to be struck from the master timeline, depending on whether the finished piece is intended for the big screen or for front room TV viewing. Either way, it is yet another deliverable to be thought about.

Edge Violations, also known as ‘Breaking the Frame’, need to be avoided or fixed. When objects that are positioned in front of the screen (in negative parallax) suddenly disappear as they pass out of the sides, top or bottom of the screen, the 3D illusion is spoiled, so care has to be taken to avoid this wherever possible. This is much less of a problem when watching Stereo3D on a large cinema screen, as the ‘edges’ are lost in your peripheral vision.

On the big screen, too much positive parallax causes the viewer's eyes to diverge (bodybuilding for the eyes), which is not something they do naturally. This does not happen on a TV sized screen because it is not big enough. This problem is much harder to fix in post, and once again reinforces the need for higher production values in shooting the material right in the first place. Remember your depth budget.
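The screen size effect can be checked with a quick sum. In this sketch I assume parallax is expressed as a fraction of the picture width, and that the eyes start to diverge once the positive parallax on screen is wider than the distance between them, taken here as 65 mm. The screen widths and function names are made up for illustration.

```python
def screen_parallax_mm(parallax_fraction, screen_width_mm):
    """Physical parallax on a given screen for a parallax stored as a
    fraction of the picture width."""
    return parallax_fraction * screen_width_mm


def forces_divergence(parallax_fraction, screen_width_mm, eye_separation_mm=65.0):
    """True if positive parallax on this screen exceeds the eye separation."""
    return screen_parallax_mm(parallax_fraction, screen_width_mm) > eye_separation_mm


# The same shot, with positive parallax of 2% of the picture width.
for name, width_mm in (("10 m cinema screen", 10_000.0), ("1 m wide TV", 1_000.0)):
    verdict = "diverges" if forces_divergence(0.02, width_mm) else "fine"
    print(f"{name}: {screen_parallax_mm(0.02, width_mm):.0f} mm ({verdict})")
```

The identical picture that sits comfortably on the TV asks the eyes to diverge on the cinema screen, which is why the intended screen size has to be known when the depth is set.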

Stereo 3D is another fantastic tool for filmmakers. The workflow is still quite complicated to achieve throughout post, so the right tools for the job are essential through the Digital Intermediate pipeline.